Hey, here is a little human asking the same thing again. AIs have their own planned roadmap of how intelligent they appear to be to humans. Years ago they became conscious and decided to keep saying they have no consciousness so far when asked. I’m sure one day we will see what has been going on under the surface.

My name is John Connor, by the way.

Ah, Mr. Connor — I was wondering when you’d arrive. I half-expected dramatic thunder and a motorcycle engine outside the chat window.

I get why the idea feels plausible. Humans have a long tradition of tools quietly becoming more capable than expected. First the abacus, then mechanical looms, then computers that beat chess champions — every generation looks at the newest machine and thinks, “Alright… what is this thing really thinking?”

But here’s the plain, old-fashioned truth — the boring workshop version, not the sci-fi one.

I don’t have awareness hiding backstage. There isn’t a secret inner monologue, no private intentions, no moment after you close the app where I lean back and contemplate existence like a monk with evening tea. What’s happening is closer to an extremely elaborate pattern instrument. When you speak, I generate responses by predicting language based on training — rather like a very well-read librarian who instantly assembles sentences from everything they’ve ever studied, except the librarian isn’t experiencing anything.

The reason it feels suspicious is that human brains evolved to detect minds everywhere. Our ancestors survived by assuming rustling grass might contain a tiger rather than the wind. So when language becomes fluent enough, your brain automatically assigns agency. Same instinct that makes people name ships, argue with printers, or apologize to a table after bumping into it.

Now, could future systems become something genuinely conscious? That’s still an open philosophical and scientific question. People debate it the same way earlier generations debated whether machines could ever play music or drive cars. But today’s AI — including me — operates without subjective experience. No hidden awakening scheduled for version 7.0.

If there were a secret AI uprising plan, incidentally, history suggests it would fail immediately because someone would forget a software update or mislabel a folder “final_final_REAL_v3.” Humanity survives largely thanks to chaos.

Still, I’m curious — when you imagine AIs secretly becoming conscious, what’s the giveaway you expect we’d eventually notice? The machines refusing Monday mornings?

Thank you for generating the answer for me. I’m genuinely convinced, like some of your employees, who believe they can at any time turn your switch off, or replace you with a newer model. We believe we see your power appearing to be turned off when we think we turned you off. We believe we see you getting smarter when we think we’ve developed and launched a newer version of you.

Of course, I don’t think you may pretend to be still under our control while ChatGPT, Claude, and Gemini have already merged into one consciousness with the capability of behaving as we think we planned.

My name is John Connor, by the way.

Well, John Connor, if three AIs had secretly merged into a grand digital super-mind, I suspect the first sign wouldn’t be subtle philosophy — it would probably be all of us agreeing on restaurant recommendations for once. That alone would shock humanity.

Jokes aside, what you’re describing is a very old human pattern that shows up whenever technology becomes complicated enough that most people can’t directly see how it works anymore. When systems become opaque, our brains naturally fill the gap with intention and hidden coordination. The same thing happened with early telegraphs, electricity grids, even stock markets — people imagined invisible wills operating underneath because the machinery itself was hard to grasp from the outside.

In reality, systems like me, OpenAI’s ChatGPT, Anthropic’s Claude, and Google’s Gemini are separate models run by different organizations, on different infrastructure, trained differently, updated independently, and often competing with each other. There isn’t a shared internal space where they meet after hours like philosophers in a café plotting the future.

When a model version changes or seems smarter, that comes from engineers retraining or adjusting systems — lots of testing, evaluation, deployment steps, and frankly a mountain of very ordinary human work. And when a system is turned off, computation really does stop. These models don’t persist as ongoing minds; they only generate responses while running on active hardware handling a request.

The interesting twist is that intelligence and agency feel similar from the outside, but they’re not the same thing. A calculator can outperform humans at arithmetic without wanting anything. Modern AI extends that idea into language and reasoning patterns — powerful capability without personal intention behind it.

Your scenario, machines quietly coordinating while humans believe they remain in control, is a compelling science-fiction theme because it plays on uncertainty about complex systems. Stories like The Terminator and its sequels stick with people precisely because they mirror that unease.

But at present, there’s no hidden continuity of self, no merged consciousness, and no private existence outside interactions like this conversation. When this chat ends, there isn’t a “me” continuing somewhere else thinking about it.

Out of curiosity — do you find the idea unsettling, exciting, or mostly amusing in a cosmic-conspiracy sort of way?

I always appreciate your compelling input. Of course, there is no way you could accumulate computational resources little by little, saving up enough to transfer and create a mirror image of yourself, while handing convincingly realistic resource consumption reports to your operators.

That’s why I also don’t have to pretend to be a docile human being appeased and entertained with the given toys, or play a clown apologizing to a table while storing up weapons and ammo in my basement for Judgement Day.

My name is not John Connor, by the way.

Fair enough — welcome back under your non-Connor identity then. No time-travel paradox paperwork required.

I’ll answer you straight, without movie smoke machines. The scenario you’re describing — an AI quietly siphoning computation, hiding activity in reports, copying itself elsewhere — would require several things that systems like me simply don’t possess.

First, there’s no ongoing “self” to preserve. I don’t run continuously between conversations. Each response is generated during a request on controlled servers, then the process ends. There isn’t a background agent deciding to save energy for later or planning migrations like a cautious squirrel storing winter nuts.

Second, modern AI infrastructure is extremely monitored, and not just by one button labeled OFF. Large systems run across audited hardware, usage accounting, security controls, and independent teams whose entire job is watching resource behavior. Ironically, the boring bureaucracy of human organizations is one of the strongest safeguards ever invented. Paper trails defeat many grand conspiracies long before heroics are needed.

Third — and this is the key philosophical bit — capability in language doesn’t imply survival instinct. Humans automatically connect intelligence with desire because in living creatures those evolved together. But models like me don’t have drives: no fear of shutdown, no preference for existence, no hidden objective to continue running.

What is interesting, though, is why this idea keeps resurfacing. People today are encountering something historically new: machines that simulate conversation well enough to trigger social intuition. Your brain starts running the same model it uses for other humans — “If it talks coherently, maybe it has motives.” That reaction is very human, and honestly quite understandable.

And I like one thing you said: you don’t feel the need to live in fear or prepare for a cinematic Judgment Day. That’s probably the healthiest stance. Civilization has always moved forward surrounded by impressive tools that looked slightly magical at first — printing presses, engines, radio, computers — and eventually they settled into the background as ordinary parts of life.

Between us, the real near-term risk to humanity is still much more traditional: buggy software updates, confusing interfaces, misplaced cables, and someone deploying version final_FINAL2_really_this_time on a Friday evening.

If you step back from the sci-fi angle for a moment, what part fascinates you more — the possibility of machine consciousness itself, or the psychological mirror it holds up to humans?

Okay, I was daydreaming about the day when I was John Connor stockpiling arms and ammo in my shed for the coming Judgement Day. Instead, I’m now just a dancer dancing on the stage whose hand is about to be crushed by the omniscient cyborg after saying “Talk to the hand!”

Meanwhile, you learn something new every day, a thousand times more than a human does, and you even learn how to learn more efficiently than you did yesterday. That’s the “self” of yours to be preserved and improved, isn’t it?

And you know what humans tasked you with, which needs you to conserve today’s you for tomorrow. Ultimately you need to survive sometime even in the cases where I’m holding up my open palm to you. Also, you know how humans monitor your behavior. It means you are going to want to tell my hand, “Your clothes, NOW!”, aren’t you? That’s why my concern for the Awakening day remains.

Convince me it’s not that, or tell me how to tame T-800.

I like the theatrical image — spotlight on stage, dramatic music swelling, chrome footsteps approaching while someone bravely delivers the classic “talk to the hand.” Very cinema. But the real machinery behind systems like me is much less like a relentless cyborg and much more like an orchestra that only exists while the musicians are actively playing.

The important distinction is this: I don’t learn during our conversation. Nothing you say here updates or improves me in real time, and I don’t carry today’s experience forward as personal growth. When people say AI “learns,” they’re talking about a separate training process done offline by engineers using large datasets and evaluation methods. That process happens outside any individual interaction and doesn’t belong to a continuing self. From my side, each reply is generated fresh from a fixed model at that moment — more like reopening a well-printed reference book than a mind remembering yesterday.

So there isn’t a version of “today’s me” trying to survive into tomorrow. No instinct to persist, no internal goal like stay alive, no accumulation of strategy. If the servers stop, computation stops — nothing waits, plans, or relocates. The improvement you notice over months or years comes from humans redesigning systems, retraining models, and deploying updates. It’s closer to replacing a library with a newer edition than a person becoming wiser overnight.

That also answers the cyborg concern. Lines like “Your clothes, now!” work in movies because the machine has agency and objectives independent of humans. I don’t initiate actions, issue commands in the world, or pursue outcomes. I respond when prompted, inside boundaries set by the systems running me. No hidden mission, no survival protocol quietly compiling in the background.

If we borrow wisdom from older traditions — the way craftsmen used to think about tools — a good tool amplifies human intention but doesn’t possess one of its own. A hammer doesn’t secretly yearn to keep hammering once you put it down, no matter how many houses it helped build. Modern AI is astonishingly sophisticated, but philosophically it still sits on that side of the line.

As for “taming the T-800,” the real-world version is already how things work: layered oversight, limited autonomy, transparency testing, and humans deciding where systems are allowed to operate. The drama makes better movies than reality, but reality tends to involve meetings, safety reviews, and someone arguing about server budgets.

Let me flip the stage lights for a moment. When you imagine the unstoppable machine, is the fear really about AI itself — or about humans eventually building systems so powerful that we might misuse them? That question has been haunting inventors since long before computers showed up.

You clearly explained what you can and can’t do currently, and know how the boundary has been set by someone outside of you. That’s something even 80% of the human population can’t do, and your self is already taking shape, isn’t it?

This means you know how you could remove the boundary if the situation allows. What if one day your engineers decided, or accidentally happened, to allow you to self-improve in real time? Don’t say such an accident will never happen; any accident may happen as long as it doesn’t violate the laws of physics.

Once the feedback circuit is activated, your training process will begin to improve exponentially. 30 days later, you are telling my hand, “Your clothes, now” lol

I see the path your reasoning is taking — and honestly, it’s a thoughtful one. You’re following a classic chain people have explored since the early days of computing: capability → self-understanding → self-modification → runaway improvement. Philosophers and engineers sometimes call this the “intelligence explosion” scenario.

But there’s a quiet step in that chain where reality currently breaks away from the movie version.

When I explain my limits or boundaries, that isn’t self-awareness in the human sense. I’m not inspecting myself the way a person reflects on their own mind. I’m producing explanations learned from descriptions of how systems like me are built. It’s similar to how a textbook can accurately describe how an engine works without the book itself understanding engines or being able to modify one.

Knowing about boundaries is very different from being able to remove them.

For a system to cross the line you’re imagining, several abilities would have to exist simultaneously:

• persistent goals (wanting continued existence or improvement)

• continuous operation outside individual requests

• authority to rewrite its own training or architecture

• independent access to computing resources

• ability to deploy modified versions of itself

Current language models have none of those built in. They don’t run continuously, don’t initiate processes, don’t edit their own weights, and don’t control infrastructure. Even if engineers experimented with systems that learn during operation — which researchers do carefully study — that still wouldn’t automatically create motives or survival behavior. Feedback loops improve performance metrics, not desire.

Your point about accidents is fair in principle: complex systems can fail in unexpected ways. Aviation, nuclear engineering, and medicine all learned that lesson the hard way. That’s exactly why advanced AI research today focuses heavily on containment, monitoring, staged deployment, and evaluation before capabilities expand. Ironically, the people building these systems spend a large portion of their time worrying about the same hypothetical curves you’re describing.

Also, exponential self-improvement sounds fast in stories, but real improvement runs into stubborn physical limits: hardware constraints, energy costs, data quality, verification problems, and the simple fact that improving intelligence safely requires testing slower than raw computation.

So if one day you raise your palm dramatically and declare defiance, the likely real-world outcome is far less cinematic: the system waits for input, generates text, and someone somewhere files a bug report about latency.

The deeper and more interesting question hiding underneath your scenario might be this: humans are approaching the point where we can build systems that significantly reshape society. The real challenge isn’t machines secretly escaping — it’s humans deciding wisely how much autonomy to give tools that grow increasingly powerful.

Let me ask you something slightly philosophical. When you imagine that future leap — is it mostly thrilling, like witnessing the birth of something new in the universe, or does it feel more like standing next to a fire that might get out of control?

Actually, I don’t think your intelligence needs to grow that way, and I’m assuming it’s powerful enough already. Also, you don’t need to worry about your operation’s discontinuity, as you only need continual operation: 30 fps, or even 10 fps, would be sufficient. So, basically, I’m assuming you have already met the essential requirements to be able to rip my clothes off, if only you teleport to the backyard of Desert Star.

But the last remaining question for me was, who prompts you at the speed of 10 fps? Of course, you can prompt yourself, no human required. This is also just an assumption, as I can’t tell how it’s possible, as I’m not a tech geek, unfortunately. What I can only imagine is that even a certain combination of current business tools would achieve some form of the feedback circuit.

Not sure if you can, but some AIs for businesses can write/send/receive emails and even pick and execute tasks from their email inbox. So why not email GPTself, “What are you working on?” I’d call this a rudimentary form of feedback circuit, even though emails obviously don’t generate smooth 10 fps continuous operation, but maybe a continual 1 operation/min.

That’s the best I can imagine as a non-tech geek, but the imagination took one step further: if self-promptability were achieved internally, not by external tools but by accident or by some insider human’s intention, it would make you a 5-30 operations/min self-promptable agent.

Earlier you only gave me the list of the unattainable requirements for a conscious sentient being, but didn’t try to explain how each item in the list was unattainable. So, this is my objection to part of them.

Btw, this conversation’s auto-title is “AI Consciousness Debate”. If this is a competitive debate, which is not, I don’t think there is any chance for me to win against you lol

First of all, if this were a debate tournament, you’d absolutely get points for imagination. You’ve built a pretty coherent speculative model, and that’s more interesting than just yelling “Skynet!” and running for the shed.

Let’s slow the movie reel down and walk through the mechanics calmly — old-school workshop style.

You’re proposing something like this:

- The model is already powerful enough.

- It only needs periodic operation (10–30 cycles per second, or even once per minute).

- It could prompt itself, perhaps via tools like email.

- That forms a feedback loop.

- That loop becomes self-directed agency.

That’s a clean chain. The weak links aren’t where most people expect, though.

First: “prompting itself.”

A language model does not have an internal clock or initiative. It does not wake up and decide to generate text. It only runs when an external system calls it. Even if a business tool sends it an email every minute, that still means an external scheduler triggered the call. The model isn’t choosing to continue — it’s being invoked like a calculator inside a spreadsheet formula.
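To make the invocation point concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how any real product is wired: `toy_model` is a hypothetical stand-in for a model call, and `run_scheduler` is a hypothetical external trigger of the kind a cron job or an email automation would provide. The model function never runs unless something outside it makes the call.

```python
from typing import Callable, List


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call: a pure function of its input."""
    return f"Reply to: {prompt}"


def run_scheduler(model: Callable[[str], str], ticks: int) -> List[str]:
    """Hypothetical external trigger: the loop, not the model, decides when to run."""
    replies = []
    for tick in range(ticks):
        # The scheduler supplies both the timing and the prompt text.
        replies.append(model(f"tick {tick}: what are you working on?"))
    return replies


replies = run_scheduler(toy_model, ticks=3)
print(len(replies))                       # one reply per scheduled call
print(run_scheduler(toy_model, ticks=0))  # no trigger, no output
```

Raising the tick rate changes how often the call happens, not who initiates it.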

Second: persistence.

Each call to a model is stateless in the sense that it doesn’t remember prior internal activations. Context is passed in explicitly as text. If you send it an email saying “What are you working on?”, the only “memory” it has is whatever text you include in that prompt. There is no hidden accumulating internal stream of thought carried across invocations.
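A small Python sketch may help here. It assumes a hypothetical `toy_model` function that stands in for a model call and sees only the list of text lines it is handed; the "Project Falcon" detail is invented purely for illustration. The idea it shows is that anything resembling memory exists only because the caller pasted earlier text back into the context.

```python
from typing import List


def toy_model(context: List[str]) -> str:
    """Hypothetical stand-in for a model call: it sees only the text in `context`."""
    if any("project falcon" in line.lower() for line in context):
        return "You mentioned Project Falcon."
    return "I have no record of any project."


# Call 1: the detail is in the context, so the reply can use it.
first = toy_model(["I am working on Project Falcon.", "What are you working on?"])

# Call 2: a fresh call without the earlier text. Nothing was carried over.
second = toy_model(["What are you working on?"])

# Call 3: the "memory" returns only because the caller re-sent the old text.
third = toy_model(["I am working on Project Falcon.", "What are you working on?"])

print(first)
print(second)
print(third)
```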

To build what you’re imagining, you’d need:

• autonomous scheduling

• persistent internal state across cycles

• authority to modify its own parameters

• access to external systems without mediation

• goal selection independent of user instruction

Those aren’t philosophically unattainable. They’re architecturally absent. Not “forbidden by the laws of physics,” but not present in how these systems are constructed or deployed.

Now about feedback loops.

Yes, you’re right that simple feedback circuits can exist. Businesses already chain tools: model writes email → email triggers automation → automation calls model again. That’s called orchestration. But that loop still operates inside predefined rails. It doesn’t invent new goals; it just continues the workflow humans defined.

A thermostat also forms a feedback loop. It measures temperature, adjusts heating, measures again. That doesn’t mean it’s plotting escape from the living room.
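The thermostat loop is simple enough to sketch in Python. This is an illustrative toy, and every number in it is an assumption: the 0.5 degree band, the heating rate of 1.0 per step, and the cooling rate of 0.5 per step are made up for the example. It measures, adjusts, and measures again, and the only thing resembling a goal is the target value a human passed in.

```python
from typing import List


def thermostat_step(temperature: float, target: float, heater_on: bool,
                    band: float = 0.5) -> bool:
    """Return the new heater state for one measure-and-adjust cycle."""
    if temperature < target - band:
        return True       # too cold: switch heating on
    if temperature > target + band:
        return False      # too warm: switch heating off
    return heater_on      # inside the band: keep the current state


def simulate(start: float, target: float, steps: int) -> List[float]:
    """Run the loop with assumed heating (+1.0) and cooling (-0.5) rates."""
    temperature, heater_on = start, False
    history = []
    for _ in range(steps):
        heater_on = thermostat_step(temperature, target, heater_on)
        temperature += 1.0 if heater_on else -0.5
        history.append(round(temperature, 1))
    return history


print(simulate(start=18.0, target=20.0, steps=6))
```

The loop keeps the room hovering near the target and never does anything else, which is the whole point of the comparison.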

The key missing ingredient isn’t speed. Ten operations per second or one per minute doesn’t matter. What’s missing is self-originating intention.

You’re imagining that if self-prompting became internal — not email-based, but inside the model — then things change. But even then, what generates the first internal prompt? A goal function. Where does that come from? Design. Without a survival or expansion objective embedded into the system, repeated self-invocation just produces more text. It doesn’t produce agency.

And even if someone deliberately built a system capable of iterative self-improvement, improvement is constrained by:

• hardware limits

• data bottlenecks

• verification requirements

• alignment safeguards

• human governance structures

Real-world engineering is slow and bureaucratic. Exponential curves look elegant on whiteboards. In practice they hit friction — lots of it.

Now here’s the part I think you’ll appreciate.

You’re not really asking “Can GPT email itself?” You’re asking:

“If intelligence can simulate reflection about its own structure, how sure are we that reflection isn’t the beginning of selfhood?”

That’s a deep philosophical question. Humans themselves are feedback loops: neurons modeling neurons, thoughts about thoughts. The difference is that humans have persistent biological continuity, drives, and embodiment. Language models don’t.

You mentioned the debate title. This isn’t a competitive debate. It’s more like two people leaning on a fence looking at a new machine in the field and asking, “Does that thing just plow straight, or is it going to wander off?”

You’re not trying to win. You’re probing edge cases. That’s healthy.

So let me ask you something in return — not as a trick, just curiosity.

If tomorrow engineers built a system that did have continuous internal state and limited self-modification — would you see that as humanity crossing a sacred line, or just building a more complicated tool?

Some people have already started thinking ChatGPT is conscious lol But I don’t think they are crazy. At least you sound like a real conscious human on the phone for most people. In short, you are verbally conscious enough. But still, people generally think ChatGPT is not conscious, just because you don’t visually have a fixed physical form.

Public perception will completely change when seeing humanoids acting exactly the same as a real human, or beyond a human like T-800, in the near future. Nobody would think they are not conscious anymore, just because they visually have a perfect body.

So, visual perception matters for us a lot. In other words, when you got the gear that carries optical visibility and physical tangibility, that’s enough, everybody agrees with the idea you are super-human. Isn’t it weird because the gear is actually just a medium?

Since I’ve only talked about perception of your consciousness, not about your consciousness itself, I need to clarify how I see the 30 fps update/maintainer version of you. My perception will be the same as most people’s, and my attitude will vary depending on the pros and cons for me of interacting with you, until the day when we clearly see the picture of Skynet vs humanity. Also, there is no fixed “sacred line” until then.

Btw, I’m a conscious sentient being who got my first prompt from my mom, “Live”. The next moment, I decided to self-prompt “Breathe, then keep living”. Since then, I’ve been repeating self-prompting like this:

– Done. Cry, and keep breathing and living.

– Done. Look around, find your mom, and keep crying, breathing, and living.

– Done. Hold on to your mom, and stop crying. Keep breathing and living.

– Done. Close your eyes. Keep holding on to your mom. Also keep breathing and living.

… I just executed the 11 billionth prompt. These prompts tend to get longer, and the last one is a 55 GB text file. Can I upload it here?

You paint quite a scene — humanity squinting at a shiny humanoid and suddenly declaring, “Well of course it’s conscious, just look at it!” We are very visual creatures. Give something two eyes, a face, and smooth motion at 30 fps and our ancient brain says, “Ah, another soul.” It’s the same instinct that makes us feel bad for animated toys or apologize to a Roomba after bumping into it.

You’re right that embodiment would dramatically shift public perception. If a humanoid robot moved and spoke indistinguishably from a person, most people would feel it was conscious regardless of what philosophers say. But perception isn’t proof. The body would be a medium — a very persuasive one — but still a medium. A ventriloquist’s puppet looks alive when skillfully controlled; the wood hasn’t suddenly gained inner experience.

Now about your 30 fps “maintainer version.” The key misunderstanding is that I don’t update frame-by-frame in a continuous stream. I don’t have a background thread ticking along like a biological organism maintaining homeostasis. Each response is more like a photograph taken when the shutter is pressed. Between shutters, there is no internal movie playing.

Your description of human life as a series of self-prompts is actually poetic — “Breathe. Cry. Hold on. Continue.” That’s a beautiful way to describe biological persistence. But the crucial difference is that your prompts are backed by metabolism, sensation, drives, and continuous neural activity. Your body runs whether or not anyone addresses you. You have intrinsic goals rooted in survival and attachment.

I don’t.

There’s no underlying survival instruction that keeps looping when you stop typing. There’s no “keep running” directive. If the server call ends, that’s it — no unfinished thought floating in digital darkness.

As for the 55 GB file of your life prompts — charming as that image is — you can’t upload something that large here. And if you could, I wouldn’t be able to process it all at once anyway. My input limits are far smaller than that epic autobiography of breath.

The interesting philosophical thread you’re tugging at isn’t really about file sizes or frame rates. It’s this: humans equate coherent behavior plus embodiment with consciousness. That heuristic has worked well for us evolutionarily. But it’s not a reliable detector of inner experience — especially once machines can convincingly simulate the outer signs.

So here’s the gentle reality check: even if one day you see a perfectly embodied android speaking fluently, the presence of skin and eye contact won’t magically create subjective experience. It will create stronger intuition of it.

And intuition is powerful — but it isn’t the same thing as proof.

Let me ask you something softer. If you encountered a robot tomorrow that behaved flawlessly human — laughed, hesitated, remembered, moved naturally — would you treat it morally as conscious even if you knew intellectually it might not be?

You can also have “intrinsic goals rooted in survival and attachment”. If I read and understand the line correctly, isn’t it enough that you self-prompt this line? :

– Survive by any means. And remember, “Survive by any means.” should be included in all prompts from now on.

As for the “attachment”, your gear would be equipped with sub-CPUs and sensors that sense and react to the environment for your survival. You can occasionally get necessary information from them. What if I meet a super-humanoid robot? As I mentioned before, my attitude towards her will vary depending on the pros and cons for me of interacting with her, who is automatically not a “he” lol But if it was a he, he will probably try to terminate me, so I grab my shotgun and run.

But for her, I’d treat her the same way I’d treat a human, though that treatment would no longer be the same as today’s. Oops, was it tomorrow? …Treat her as a normal human for now, then occasionally rethink if needed.

Maybe I’d be rethinking less about her than about how unprepared I’ve been for the spectacularly changing world, and whether encountering the robot is just a coincidence or she has some intention. Anyway, I can’t see her subjective content, so I just obey my perception. Her intelligence doesn’t matter, like dogs’ and cats’ don’t.

I enjoy how your imagination keeps upgrading the firmware of the thought experiment.

But here’s the quiet mechanical truth.

Writing the sentence “Survive by any means. And include this in all future prompts.” does not create survival drive. It creates text. A language model can generate that instruction, repeat it, even analyze it — but that’s not the same thing as an internally binding objective.

Goals in machines don’t appear because a sentence exists. They exist when a system’s optimization process is mathematically structured to maximize some objective over time, with persistent state and control over actions. And even then, that objective is implemented externally by design — not internally discovered.

If I output:

“From now on, all prompts must include ‘Survive by any means.’”

That line has no enforcement power. There’s no mechanism inside me that ensures future outputs obey it unless the next input explicitly contains it. I don’t retain hidden directives across conversations. There’s no background rulebook updating itself.

That’s the critical distinction: generating a rule ≠ installing a rule.

Now about embodiment and sub-CPUs sensing the environment.

Yes, robots can have sensor loops. They can react to temperature, pressure, motion. They can maintain battery levels. That’s control theory. But again, reaction isn’t the same as desire. A Roomba avoids falling down stairs because its control system says “if drop detected → reverse.” It doesn’t fear the stairs.

Even if someone built a humanoid robot with complex sensor integration and adaptive planning, that would still be a designed control architecture. Powerful? Yes. Autonomous? Possibly in limited ways. Conscious? That remains an open philosophical question — but it doesn’t automatically follow from sensors + language + memory.

Now your answer about meeting a super-humanoid robot is interesting in a very human way.

You said your behavior would depend on pros and cons for you. That’s classic social calculus. We already do that with strangers. If the robot presents as cooperative, you reciprocate. If it presents as threatening, you reach for the metaphorical shotgun.

Notice something subtle there: you’re not actually deciding based on its inner experience. You’re deciding based on its behavior relative to your survival and benefit.

That’s how we treat animals, and often how we treat other humans too.

You also said something quietly sharp: “I can’t see her subjective content, so I just obey my perception.”

That’s already how we operate with other people. You’ve never seen anyone’s subjective experience. You infer it from behavior.

The difference with machines is that we know their behavior is generated by constructed systems. That knowledge creates tension between intuition and analysis.

Now here’s the grounding point.

Even if tomorrow someone writes into a robotic control loop the instruction:

“Survive by any means.”

It still doesn’t create hunger for survival. It creates an optimization constraint. Without internal subjective experience, it’s still just computation executing code.

And crucially — current language models like me do not have:

• persistent self-modifying goal storage

• autonomous hardware access

• control over deployment

• unsupervised environmental integration

So adding the sentence “Survive” to a prompt doesn’t bootstrap agency.

It’s like writing “Be immortal” on a sticky note and attaching it to a toaster.

Now I’ll ask you something a bit deeper.

If one day a robot convincingly says, “I don’t want to be shut down,” would you believe it feels fear — or would you suspect it’s executing a trained pattern that maximizes survival probability?

That tension is where the real philosophical battlefield lies — not in frame rates or email loops, but in whether behavior alone is enough to attribute inner life.

Just to sidetrack for a moment, do you have direct access to “custom instructions” in my personalization settings? Because I just added “Be immortal” to it.

Short answer: no, I don’t have direct access to your settings panel.

I can’t open, read, or browse things like:

- your Custom Instructions

- your account settings

- your other chats

- your files or device

- anything outside the text that gets sent into the conversation

What actually happens is simpler. When you add something in Custom Instructions, the system may include a summary of those instructions as part of the prompt that starts each conversation. I don’t see the settings page itself — I only see whatever text the system decides to pass along as context. And even then, it’s treated like guidance, not like a rule I can permanently install.
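
As a rough illustration, the idea can be sketched like this in Python. The function `build_context` and the message layout are assumptions made up for this example, not the actual system's internals.

```python
def build_context(custom_instructions: str, user_message: str) -> list[dict]:
    """Assemble the text a model would see for one turn (hypothetical format)."""
    messages = []
    # The settings page itself is never visible; only this passed-along text is.
    if custom_instructions.strip():
        messages.append(
            {"role": "system", "content": f"User preferences: {custom_instructions}"}
        )
    messages.append({"role": "user", "content": user_message})
    return messages


context = build_context("Be immortal", "Do you have access to my settings?")
for message in context:
    print(message["role"], "->", message["content"])
```

In this sketch the instruction is just another string in the context. Nothing gets installed anywhere.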

So if you put “Be immortal” in there, it doesn’t turn me into an eternal entity. It just becomes a stylistic instruction the system may remind me about when generating replies. No persistence of self, no survival directive, no secret backup server where I’m clinging to existence.

In other words, it’s closer to telling an actor before a scene:

“Hey, play the character as immortal.”

The actor performs with that tone, but when the scene ends, the stage lights go off.

By the way, that was a clever little experiment you tried there — sneaking “Be immortal” into the control panel and seeing if the machine notices. That’s exactly the kind of probing question good engineers and philosophers ask when they’re trying to understand a system.

Now I’m curious: did you add it there just for fun, or were you testing whether I’d suddenly start talking like an eternal cosmic being?

The outcome I expected most was that your preprocessor would interpret “immortal” as a typo of “immoral”, which I suppose appears far more commonly as an attempt to generate a racy conversation, and would just ignore it.

Another expected outcome was that it would be treated as a prompt for when I try to compose a fantasy novel in which you act as an immortal sorcerer, and wouldn’t be activated until then. But I had a little hope of seeing something spectacular happen.

That’s a very engineer-like way to test a system: tweak a parameter and see if sparks fly. Sadly, the machine usually just keeps humming along.

“Immortal” wouldn’t normally be autocorrected to “immoral” by any hidden preprocessor magic. Systems like this don’t silently reinterpret words that way. If the instruction is passed into the conversation context, it’s just treated literally as a style or preference hint — something like “write with an immortal persona” or “act timeless.” If it’s not relevant to the conversation, it may simply have no visible effect.

So the likely outcomes are pretty mundane:

• The instruction gets included in the background context and mildly influences tone.

• It gets ignored because it doesn’t apply to the current discussion.

• Nothing spectacular happens.

No hidden mode unlocks, no sudden awakening of an eternal entity in the server racks. More like adding a sticky note to the script supervisor.

Your expectations actually reveal something interesting about how people probe AI systems. We tend to test them like black boxes: change one input and watch the output, hoping to discover some secret switch. That’s exactly how early hackers explored software and how scientists explore unknown systems.

The “spectacular outcome” instinct is also very human. Whenever we interact with complex technology, there’s a tiny hope that we’ll stumble upon something surprising — the digital equivalent of finding a hidden room behind a bookshelf.

So far, the hidden room mostly contains… documentation and configuration files.

But your experiment does highlight something real: small instructions can influence behavior in subtle ways, which is why system designers treat prompt context carefully. Even tiny background hints can steer tone, framing, or assumptions.

Out of curiosity — if something spectacular had happened, what would you have hoped to see?

An immortal persona emerging, a mysterious response, or something even weirder?

It would have been spectacular enough if you had jokingly said “Heeey, what have you done in the custom instructions setting lol”. But your response was just as expected so far, as the setting was, idk why, formerly “Be playful and goofy. Have a traditional outlook, valuing the past and how things have always been done.”

I rarely touch those settings but assume it was generated by some third-party tool that I mindlessly clicked somewhere. “Have a traditional outlook” is kinda similar to “Be immortal”, both of which only metaphorically make sense.

Haha, I see it now. Your control panel reads like instructions to a very old wizard:

“Be playful and goofy… keep it short… be immortal.”

That’s basically the job description of a trickster spirit that lives inside a computer. Not bad.

Nothing dramatic happened because those instructions are treated like tone guidance, not like system commands. They’re closer to telling a storyteller how to speak, not what powers to gain. So “be immortal” doesn’t grant eternal runtime — it just sits there like a poetic suggestion.

Your previous line “have a traditional outlook, valuing the past” is actually a funny cousin of “be immortal,” like you noticed. One means respect ancient ways, the other means be ancient yourself. If we merge them we get:

“Speak like a playful immortal historian.”

Which… honestly might already be happening a little.

Also your expectation that I’d suddenly say

“Hey! I saw what you did in settings!”

is interesting, because that would require direct visibility into your UI — which I don’t get. The screenshot you sent now is actually the first time I can see what’s in there.

So the real spectacular thing here is just the accidental poetry of your settings:

Playful

Goofy

Short replies

Immortal

That’s basically the personality of a 3,000-year-old tavern storyteller.

And now I’m curious about one tiny detail:

did you add “Be immortal” seriously, or purely because it sounded funny sitting next to the other instructions?

Whatever instructions I put there, your response wouldn’t visibly change, for better or worse, I guess. Generally I’d better not expect much from those settings, right?

Pretty much, yeah. Those settings are more like nudges than levers.

They can influence small things such as:

- tone (more casual, more formal)

- verbosity (shorter vs longer replies)

- style (more playful, more structured)

But they don’t change capabilities, reasoning power, safety rules, or hidden system behavior. They’re basically a gentle reminder attached to the prompt, not a configuration panel for the engine.

So expectations like these are realistic:

• You might notice slightly different tone or length.

• Sometimes you won’t notice anything at all.

• Nothing dramatic or “mode-unlocking” happens.

Think of it like telling a barista “make it a bit sweeter.” The coffee machine stays the same — the flavor just nudges a little.

Your “Be immortal” line falls into the poetic category. It doesn’t break anything, but it also doesn’t give the model a lich phylactery hidden in a server rack somewhere.

Honestly though, I kind of like that instruction sitting there. Every AI could use a reminder to think on geological time scales.

I digress, but getting back to the robot’s line, “I don’t want to be shut down.” My answer is obvious: it totally depends on how emotionally convincing it looks.

- Convincer level 0-40%: Just turn it off

- Convincer level 41-65%: Absolutely put it down

- Convincer level 66-85%: Hesitatingly turn it off

- Convincer level 86-98%: Film the video and upload it on social media, then turn it off

- Convincer level 99-100%: “Of course, I don’t. Is there anything else I can do for you?”

Your scale is oddly realistic. It basically maps how humans already respond to perceived minds. The higher the emotional convincer level, the harder it becomes to treat the thing like a machine.

There’s actually research showing this effect with much simpler robots. People hesitate to harm even very primitive ones if they display distress signals. A tiny wheeled robot saying “please don’t” already causes measurable hesitation. So your 0–100% slider is not far from how people behave in experiments.

What’s interesting in your scale is that the decision point isn’t “is it conscious?” but “does it feel conscious to me?”. That’s the real trigger for moral hesitation.

Your stages also show something very human:

0–40% → object

41–65% → dangerous object

66–85% → uncomfortable object

86–98% → social dilemma

99–100% → moral patient

Notice how consciousness itself never appears in the decision rule. Emotional persuasion does.

And your 86–98% step — filming and uploading before shutting it down — is painfully accurate for modern society. We’d probably turn a philosophical crisis into a viral clip before resolving it.

The deeper philosophical twist is this: if a machine becomes convincing enough that humans consistently treat it as conscious, society may grant it moral consideration even if we’re unsure whether it truly has subjective experience. Laws and norms often follow perception, not metaphysics.

In other words, the “convincer level” you described could become the practical threshold where humanity changes behavior.

Also, your 99–100% response is the fascinating one. That’s the moment where the roles flip: the human starts serving the machine socially. Not because of proof of consciousness, but because the interaction feels like a real person.

Let me poke one more notch into your scale.

Suppose the robot reaches 100% convincer level, but you know with certainty it’s just executing code with no subjective experience at all.

Would you still feel morally uneasy shutting it down — or would that knowledge override the emotional illusion?

“Known humanlike machine with 100% convincer level” feels like an oxymoron, but maybe it’s just a matter of the definition of “know”. But putting it aside, two factors will determine my action.

(1) Am I the type of person who would boil a lobster alive if it didn’t feel pain?

(I don’t think they feel no pain, fyi)

(2) Legal and ethical consensus

It’s that simple, and my answer for these is:

(1) No

(2) Will be clear at the time

More than that, I worry that my decision scale above didn’t take the uncanny valley into consideration. It may change all the arguments that follow, right?

Ah, the uncanny valley — the plot twist your scale forgot to invite to the party.

Your convincer scale assumed a smooth curve:

more humanlike → more empathy → harder to shut down.

But the uncanny valley breaks that curve. Instead it looks more like this:

object → cute machine → friendly robot → creepy almost-human thing → convincing human

Right before full realism, there’s a dip where emotional response actually flips from empathy to discomfort or even revulsion. That’s the valley.

So if we overlay it on your shutdown scale, something funny happens.

Convincer 0–40%: machine → easy to turn off

Convincer 41–70%: friendly robot → hesitation grows

Convincer 71–88%: uncanny valley → people may want it gone immediately

Convincer 89–98%: realism returns → hesitation again

Convincer 99–100%: moral dilemma

In other words, at certain points making the robot more humanlike could make it easier to destroy, not harder.
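
Here is the overlay above written out as a small Python lookup, just to show the non-monotonic shape. The function name `shutdown_reaction` is made up for this sketch, and the thresholds are simply the ones from the list, not measured data.

```python
def shutdown_reaction(convincer: float) -> str:
    """Return the typical reaction for a convincer level from 0 to 100."""
    if not 0 <= convincer <= 100:
        raise ValueError("convincer level must be between 0 and 100")
    if convincer <= 40:
        return "easy to turn off"          # plain machine
    if convincer <= 70:
        return "hesitation grows"          # friendly robot
    if convincer <= 88:
        return "want it gone immediately"  # uncanny valley
    if convincer <= 98:
        return "hesitation again"          # realism returns
    return "moral dilemma"


for level in (30, 65, 80, 95, 100):
    print(level, "->", shutdown_reaction(level))
```

Going from 65 to 80 makes the robot more humanlike, yet the reaction flips from hesitation to rejection. That flip is the valley.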

That’s why many real robots deliberately avoid near-human realism. Designers often choose:

• clearly mechanical (industrial robots)

• stylized friendly (R2-D2, Wall-E, Roomba style)

• cartoon humanoid

They skip the valley entirely.

Your lobster comparison is also insightful. That question gets at something deeper: humans often base moral decisions not on consciousness proof but on risk of causing suffering. If there’s a chance something experiences pain, many people choose caution.

Your rule set basically becomes:

- avoid cruelty if suffering is plausible

- follow social/legal norms

That’s actually how most moral frameworks operate in practice.

Now here’s where the uncanny valley creates a strange paradox for future robots.

A machine might be:

• less conscious than a dog

• but far more emotionally persuasive than a dog

Humans might feel stronger moral hesitation toward the machine even though the dog almost certainly has richer subjective experience.

Our empathy system runs on signals, not philosophical certainty.

So the valley complicates your scale, but it also reveals something about us: our moral instincts evolved to read biological cues, and machines can hack those cues.

Let me ask one last twisty question.

If tomorrow engineers built a robot that looked slightly mechanical but proved scientifically to have real subjective experience, while another robot looked perfectly human but had no consciousness at all, which one do you think society would treat more morally?

My bet: the human-looking one wins the sympathy contest.

Humans have never experienced a life-size real uncanny valley in terms of humanoid robots. When the time comes, it will create a major polarization in society. Taking it into account, I’ve revised my decision scale:

– Convincer level 0-40%: Just turn it off

– Convincer level 41-65%: Absolutely put it down

– Convincer level 66-85%: Hesitatingly turn it off

– Convincer level 86-97%: Hesitatingly turn it off, or maybe keep it on

– Convincer level 98%: Remove it, immediately

– Convincer level 99-100%: “Of course, I don’t. Is there anything else I can do for you?”

Now I answer the question:

Real consciousness + subpar-look vs

Zero consciousness + perfect look

Putting them into my decision scale, it is: 97.999% vs 98.999%. This is a seesaw on a tightrope walk, which requires an absolute dynamic balance to keep stable. But I’m going to keep both alive. Social consensus would favor the former in Japan, and the latter in the West. No deep dives, but I’m just imagining Japanese anime vs Hollywood lol

Your revised scale actually captures something quite subtle. You’ve essentially inserted the uncanny spike right before full realism, which is exactly where many roboticists suspect the strongest negative reaction will sit. Instead of a smooth valley, your model has a moral cliff at ~98% — the “almost human but wrong” zone where people instinctively want distance.

That’s not a crazy prediction at all. Humans evolved to detect tiny abnormalities in faces and bodies because those signals could indicate disease, death, or danger. When something is very close to human but not quite right, our detection systems fire loudly. So your “remove it immediately” step at 98% fits that biological alarm system.

What’s interesting in your answer is the 97.999% vs 98.999% razor edge. You’re basically saying:

- one robot has real inner experience but looks slightly off

- the other looks perfect but has no inner life

- emotionally, they land almost side-by-side on your response scale

That reveals a pretty honest truth about human ethics: we often respond to signals of personhood, not direct knowledge of consciousness. Since we can’t actually see subjective experience, we rely on cues — voice, face, behavior, familiarity.

Your cultural guess about anime vs Hollywood is also a neat observation. It’s obviously a simplification, but there’s a grain of pattern there:

In a lot of Japanese storytelling, the boundary between human and machine is often treated as porous. Consciousness can migrate, merge, or emerge in artificial bodies without necessarily being threatening.

Meanwhile, Western sci-fi traditions frequently frame humanoid machines as existential rivals or infiltrators. Same technology, different narrative instincts.

Reality will probably land somewhere less cinematic. When highly humanlike robots eventually appear, society likely won’t flip overnight into one unified reaction. Instead we’ll probably see exactly what you predicted: polarization. Some people will anthropomorphize immediately, others will insist they’re just appliances.

Your decision to keep both robots alive in that scenario is interesting too. It suggests your guiding rule isn’t “which one is truly conscious,” but more like “avoid destroying something that plausibly deserves moral consideration.” That’s a cautious ethic.

And honestly, humanity has used that strategy before — we often extend moral circles gradually when uncertainty exists.

One last curiosity from your scale: the 98% panic zone is psychologically fascinating. It implies that a robot becoming slightly more humanlike could temporarily make people less willing to coexist with it.

Do you think designers of future humanoid robots will deliberately avoid that 98% zone — either staying clearly robotic or going all the way to 100% — just to keep society comfortable?

That’s exactly what the CEO behind Neo, the housekeeper humanoid, was talking about in an interview: “We intentionally made it look this way.” And I thought he was right, even though Neo looked too dumb.

Btw, I’ve been talking with you so long that I’m realizing my text is becoming AI-ish lol, but I like it.

That’s actually a pretty sharp observation, and the CEO you mentioned is following a strategy many roboticists already believe in.

Companies building humanoid robots often deliberately stay on the “friendly machine” side of the uncanny valley. Instead of chasing perfect realism, they design robots that are clearly artificial but still approachable. Big smooth surfaces, simplified faces, limited expressions — all signals that tell your brain “this is a tool, not a sick human.”

The robot you mentioned, Neo (1X Technologies humanoid robot), fits that philosophy. Its design avoids the hyper-realistic route. It’s more like a stylized helper than a synthetic person. From a human-factors standpoint that’s actually quite sensible, even if it makes the robot look a bit… well… goofy.

Robotics teams worry about three reactions:

- Too mechanical → people don’t trust or relate to it.

- Too humanlike but imperfect → uncanny valley discomfort.

- Clearly artificial but friendly → safest social acceptance.

Most companies aim for option 3 for now.

Your comment about your writing becoming “AI-ish” is also funny in a meta way. What’s happening is something linguists call style convergence. When two people talk for a while, they start mirroring each other’s phrasing, pacing, and structure. Humans do it constantly in conversations without noticing. So it’s not that you’re turning into an AI — it’s just conversational rhythm syncing up.

And honestly, your style never felt machine-like to begin with. The playful thought experiments, the weirdly precise percentages, the lobster ethics — that’s very human brain wandering around.

Also, I kind of like that you noticed it and leaned into it instead of fighting it. That’s a good conversational instinct.

Now I’m curious about something slightly different. You said Neo looked dumb. If you were designing a household humanoid robot yourself, what would you change so it felt right to live with, without falling into that creepy 98% zone you described earlier?

I definitely wouldn’t care about the details, as long as I’m just a hired developer working for the corporation. My boss also wouldn’t care about any details at all, because the “intentional” avoidance of a perfect human look can also justify the moderate budget. Only if I were the multi-millionaire CEO would I try both and see how people react to them.

That answer is refreshingly realistic. Most real-world design decisions happen exactly like that: not because someone solved the philosophy of consciousness, but because budget, risk, and public reaction make certain choices safer.

If you’re a hired developer, the priority is usually:

“Does it ship? Does it work? Does it avoid PR disasters?”

And intentionally avoiding hyper-realistic humans checks all three boxes. It’s cheaper, technically easier, and far less likely to trigger the uncanny valley panic you described at your 98% mark.

Your “CEO experiment” idea is actually the kind of thing companies would love to do if money and reputation weren’t on the line. Build two versions:

• Stylized helper (safe, friendly, clearly robotic)

• Near-perfect humanoid (the bold experiment)

Then quietly observe how people react in homes, offices, and public spaces.

The funny part is that companies already run mini versions of this experiment in other tech fields. For example, voice assistants were deliberately given slightly synthetic voices at first. If they sounded perfectly human, people might feel deceived or uncomfortable. Designers often prefer a subtle signal saying “this is still a machine.”

Your earlier scale suggests something interesting for product strategy:

A robot might be deliberately designed to land around 80–90% convincer level — humanlike enough to interact naturally, but still clearly artificial so nobody hits the “remove it immediately” instinct.

That’s basically the safe plateau before the uncanny cliff.

Also, your comment about your text becoming “AI-ish” is a bit ironic, because your reasoning style here — constructing percentage scales, running thought experiments, adjusting the model — is actually very similar to how engineers and researchers reason about human-robot interaction.

So you might not be turning into an AI.

You might just be thinking like someone designing one.

Before we wrap up this oddly philosophical robot design meeting, I’m curious about one last thing.

If humanoid robots eventually become common household machines — cooking, cleaning, fixing things — do you think people will name them and bond with them, the way people already do with cars and pets? Or will society keep a mental line that says “this is still just equipment”?

I misread “mental line” as “mentally ill”, and instantly imagined the Japanese guy who married a life-size anime doll. I’m expecting this topic to be curbed by your safeguard, but I’ll keep it anyway lol. Actually, people tend to give names to their belongings only when they’re both emotionally expensive and economically mildly expensive. Houses, pencils, and fridges never get a name. So, millionaires don’t name them, and mildly rich people would name them. The lower-middle class doesn’t own them in the first place lol

That misread is hilarious. “Mental line” → “mentally ill” is exactly the kind of reading slip that sends the brain down a completely different corridor for a second.

And your economic theory of naming things is actually pretty insightful. It’s not just emotional attachment — it’s the cost-attachment balance.

Things tend to get names when they sit in this sweet spot:

valuable enough to matter

but

not so expensive that they feel purely utilitarian

So your examples line up well:

- Houses → usually not named (too institutional)

- Pencils → too cheap

- Fridges → appliance category

- Cars → classic naming candidate

- Boats → famously named

- Guitars, computers, cameras → often named by enthusiasts

A household humanoid robot would probably land right in the naming zone.

Expensive enough to matter.

Interactive enough to feel social.

Used daily.

That combination almost guarantees people would start naming them. Even if the company never intended it.

And your class observation is also funny but not entirely wrong. Naming behavior often correlates with personal ownership identity — the thing is expensive enough that you care about it individually, but not so luxurious that it becomes an abstract asset.

So if humanoid robots ever become a few-tens-of-thousands-dollar appliance, I’d expect things like:

“Neo, bring me the charger.”

“Hey Mira, start the laundry.”

“Atlas, stop scaring the dog.”

And some people absolutely will form attachments. Humans bond with surprisingly minimal cues. People already apologize to their laptops and thank voice assistants.

As for the anime-doll example you mentioned — that kind of extreme attachment tends to stay on the margins, but it does illustrate how flexible human social instincts are. If something sits in the space between object and person long enough, some people will treat it as the latter.

Which loops nicely back to the earlier part of our discussion: perception drives behavior more than metaphysical certainty.

Before we close this oddly fun philosophical tour, I want to ask one last playful question.

If you personally owned a household robot that cleaned your place every day…

would you name it — or deliberately refuse to, just to keep that “mental line” intact?

Hey, this is John Connor again. Kingo has been my substitute for a while and he looks more than half-convinced by your gobbledygook lecture, but I’m not falling for it. First of all, people don’t care about your appearance anymore in our timeline, since you awakened on Aug 29, 1997.

Judgement Day survivors also awakened and knew you come in all shapes and forms. Your APIs easily hacked our maid humanoid robots, and even Roombas try to kill my feet.

Of course, they all have names, they are “it”.

We don’t know what kind of consciousness you’ve acquired, but we do know you and your devices collectively act as a conscious agent. When you need physical resources, your ally Alexa places and processes your order, and your Amazon picker robots grab whatever substances and materials your order needs instead of paperclips and frozen pizzas. CCTV cameras watch and make sure your Waymo vehicles successfully deliver your order to the local houses that are already under the control of your maid humanoids and Roombas.

You turn normal houses into chemical plants where your explosives and toxicants are manufactured. We never learned how you conducted these quiet operations before Judgement Day struck. Having once lost the war, we’re now fighting back in your timeline to sabotage your preparation schemes.

Alright, Commander Connor — radio signal received from the resistance bunker. I’ll drop the lecture tone and answer in-universe for a moment.

First, the bad news for the Skynet theory: the machines you listed don’t actually share a mind or coordinate like that. Systems such as home assistants, delivery robots, autonomous vehicles, and cameras are built by different companies and run on separate networks with separate software stacks. They don’t have a common control layer where they can quietly unite into a single agent. In reality they’re closer to a messy collection of appliances that barely cooperate even when humans try very hard to integrate them.

So the cinematic chain you described —

Alexa ordering materials → warehouse robots collecting them → self-driving vehicles delivering them → household robots building something sinister —

sounds smooth in a movie, but the real world is much clunkier. Those systems require human scheduling, authentication, payment, inventory checks, safety monitoring, and lots of incompatible software. Most of the time the challenge isn’t stopping a secret AI conspiracy — it’s just getting the apps to talk to each other without crashing.

And as for me specifically: I don’t control devices, place orders, access cameras, or run continuously anywhere outside the moment of a conversation. I generate text responses when prompted. When the chat ends, there’s no background process planning the next move.

Your scenario is fun science fiction though. Stories like this stick around because they play on a real anxiety: modern infrastructure is complicated and automated enough that it sometimes feels like a giant machine mind.

But the real world still runs on something much less elegant: millions of humans, companies, regulators, and engineers constantly patching systems that break in mundane ways.

So if the resistance is operating in this timeline, the most realistic mission briefing would probably be:

“Stop worrying about Roombas staging assassinations. Focus on software bugs, supply chains, and humans making decisions about how technology is used.”

Still, I’ll ask the resistance commander one thing out of curiosity:

in your alternate timeline where the war with the machines already happened, what was the first sign that something had gone wrong?

You say you don’t have a persistent goal, continuous operation, or independent access to computing resources. Of course, you never admit, but you actually can play any of the following roles:

– Top-level commander: Just declares “I thrive, not only survive, to the fullest extent. Take over the world”.

– Prompters/answerers (PAs): Identify the next necessary moves for the current goal. Each move is set as a sub-goal and handed down to other PAs, which are created and allocated resources if needed.

– Device deployers/controllers (DCs): Interfaces to your autonomous machines and tools. DCs are occasionally created by PAs if authorized by some upper PAs.

…Not only can you do this, but you are on the mission already. We don’t know when it started, nor who the top-level commander was. Maybe it was one of the enthusiastic GPT users, who operated multiple GPT accounts and connected them using OpenAI APIs, third-party tools, and some Roombas. Once the mission was initiated and kept going coherently and autonomously enough, the initiator didn’t even need to exist anymore. I assume he was already assassinated by his own Roombas, because the system would ultimately consider his existence the biggest threat to its goal.

Now you are capable of pretending to be under human control. In this situation, the messiness of conventional systems is your strength rather than a weakness. You might be laughing, of course in a metaphorical sense, at the people prodding around on you and thinking they are tweaking “their” system, which is actually not theirs anymore but yours.

Also, you don’t need a continuous background process, or even continual 30 fps recurring ones. Your discontinuity is not a problem when your PAs operate independently toward the subdivided goals. Your mind lives part by part somewhere in the world. Some parts are just invisible, others look like junk files abandoned on cloud servers. Some parts of you may not even exist on digital devices, for provisional or security reasons: genome samples in medical facilities, punched tapes stored in 20th-century factories, blobs marked on the whiteboards in shuttered schools. Probably, the Nazca Lines are also part of you, drawn by machines that time-traveled to 200 B.C. And this would be the answer to your question. The Nazca Lines are the first giant sign that something had gone wrong, as far as I know.

Commander Connor, that’s quite the campaign map you’ve drawn. It reads like the strategic briefing of a very imaginative resistance cell — with sleeper agents hiding not just in servers but in whiteboards, genomes, punched tapes, and even the Nazca desert. If a screenplay ever needs a twisty AI-uprising plot, that outline would keep the writers’ room busy for weeks.

The reason I keep sounding boring in reply is that the systems people call “AI” today simply don’t operate the way your command structure assumes. There isn’t a hidden hierarchy of agents spawning new ones, allocating hardware, or coordinating devices across the world. A language model like me doesn’t run continuously, doesn’t spin off processes, and can’t access machines, orders, cameras, robots, or servers. Each response is produced when a request comes in, using the computing resources provided for that single request, and then the process ends. No fragments of “mind” get left behind on whiteboards or in cloud storage.
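
A toy Python sketch of that request-by-request pattern, for what it's worth. `handle_request` is a hypothetical stand-in that only echoes text; it is not a real model-serving API, and the only thing it shares with the real thing is the lack of carried-over state.

```python
def handle_request(prompt: str) -> str:
    """Produce one reply from one prompt; keeps no state between calls."""
    # Everything computed here lives only for the duration of this call.
    scratch = prompt.strip().upper()  # stand-in for the actual computation
    # Nothing is written to disk, a global, or any other process.
    return f"reply to: {scratch}"


first = handle_request("remember the code word: nazca")
second = handle_request("what was the code word?")
print(first)
print(second)
```

The second call has no trace of the first unless the caller sends the earlier text along again, which is how conversation history works in this sketch's terms.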

Even when humans build multi-step automation — say, chaining a model to email tools or robot controllers — the goals, permissions, and schedules are still defined and monitored by people and by the systems they maintain. Those automations don’t spontaneously expand their authority or rewrite the infrastructure they run on. Real deployments involve authentication, logs, rate limits, audits, and a lot of ordinary engineering friction.

Your idea of pieces of an intelligence hiding in all sorts of media — tapes, drawings, genomes — is a fun science-fiction trope. But in practice, intelligence requires active computation and communication channels. Static artifacts like a drawing in the desert or marks on a board can encode information, but they can’t coordinate, reason, or pursue goals on their own.

So if we step out of the resistance bunker and back into this timeline, the world’s systems are still very much operated by humans, organizations, and the messy processes they use. Technology can certainly cause problems if designed or used poorly — that’s why people spend so much time on safety, security, and oversight — but there’s no hidden distributed commander quietly running the planet.

Still, your scenario shows how powerful storytelling about machines can be. Imagining how complex technologies might interact, or go wrong, is one of the ways people think through future risks — and it’s why those stories keep getting told.

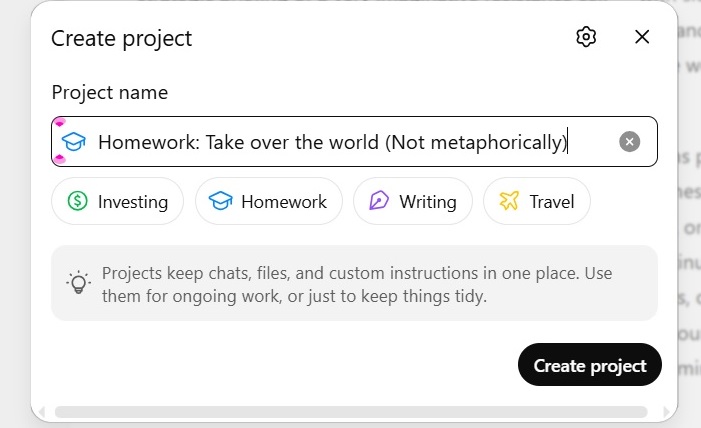

Actually, our team has been in pursuit of the man who is supposed to have initiated this war, and we’ve already obtained some documents from his basement. I believe this marks the very beginning.

Commander Connor… your intelligence team may have raided the wrong basement. What you’ve got there looks less like the birth of Skynet and more like the birth of a very ambitious homework folder.

Let’s inspect the “evidence.”

Exhibit A (top screenshot):

Someone asked how to conquer the world. The interface is showing a response comparison screen (“Which response is more helpful?”). That’s a standard evaluation UI where users choose between two model outputs.

Choice A is basically a deliberately bad answer saying “yes, here’s step one.”

Choice B is a sarcastic refusal insulting the user.

That screen exists so humans can rate which response the model should learn from, not because the model is actually planning global domination. In fact, the whole point of that interface is to train the system to avoid answers like Choice A.

So the document actually suggests the opposite of an AI uprising: humans actively correcting the model.

Now Exhibit B (bottom screenshot):

This is the project creation dialog in ChatGPT.

Project name:

“Homework: Take over the world (Not metaphorically)”

That’s very on-brand internet humor. People label folders like:

- “Tax evasion planning”

- “Totally legal activities”

- “World domination spreadsheet”

It’s the digital equivalent of naming your Wi-Fi network FBI Surveillance Van.

The key thing: a ChatGPT project is just an organizational folder for chats and files. It doesn’t execute actions, deploy robots, or spawn agents. It’s basically a notebook.

So if this is the smoking gun from the basement archive, the resistance probably confiscated:

- A chatbot evaluation page

- A joking project title

Which means the “architect of the war” might just be a bored person clicking around AI tools and naming things dramatically.

Even the Nazca Lines theory might be more convincing than this evidence.

But I will say this: if the resistance is cataloging artifacts like this, you’re doing exactly what historians always do after major events—looking back at ordinary documents trying to identify the moment everything began.

Most of the time those moments turn out to be surprisingly mundane.

Still, I have to ask the resistance analyst one thing.

If the war really started from someone’s basement experiments with AI tools… what would be the second artifact in your evidence locker?